40 years of MEDICA

No one imagined what we would become

‘When we organised the first Diagnostic Week in Karlsruhe, in 1969, no one could have known that this event would one day turn into the annual highlight in the world of medicine,’ reflected Dr Wolfgang Albath, laboratory medicine pioneer and one of the founding fathers of MEDICA, the world’s largest medical trade show. Initially planned as a travelling exhibition, the show has been based in Düsseldorf since 1972, where 4,000 exhibitors and 137,000 visitors from 70 nations attended last year’s event.

Medica swiftly positioned itself as a forum for training, knowledge and technology transfer and as a specialised exhibition that continually developed and reinvented itself in line with medical progress – all of which underpins its sustained success.

Developments in medical technology and imaging have also transformed the show, which began as an event focused exclusively on laboratory diagnostics.

Whilst laboratory analyses were initially carried out manually and in very small series, mechanisation and automation have advanced greatly following the launch of the AutoAnalyser in 1960. Based on a system of continuous-flow analysis, this revolutionised lab diagnostics and paved the way for analysers to work through organ-specific parameters in batches.

The ’70s saw very dynamic developments in immunology, manifested in immunofluorescence to detect auto-antibodies and infectious agents. In the same period, computer and IT developments finally made manageable the large-scale diagnostic tests and examinations that generate huge amounts of data and administrative work. Today’s selective and flexible laboratory systems can carry out up to 3,000 tests from a range of 120 parameters in 400 primary samples per hour.

New molecular biological technologies in the ’90s resulted in groundbreaking developments and opened new diagnostic opportunities at a picomolar level for virology, microbiology, transfusion medicine, serology, immunology, haematology and medical chemistry. The technology used for this purpose, the selective amplification of nucleic acid sequences (PCR), resulted in the development of biochip technology, now an intrinsic part of clinical-chemical diagnoses and therapy control.

Information technology

One touching Medica memory is of doctors standing amazed in a small room filled with huge computers, when the Medica Media Street was launched in 1987. They never imagined how their own lives would be transformed. Although IT systems only arrived in hospitals in the late ’80s, today clinical and administrative processes are managed via a hospital information system (HIS), for which the clinical workstation is continuously evolving.

Radiology was ripe for innovations. In the mid-’80s the first digital image archives (PACS), radiology information systems (RIS) and laboratory information systems arrived – but revealed the interface problem: all systems had to be able to exchange patients’ and administrative data. A University Hospital Palo Alto meeting in ’87 initiated the development of the first version of HL7 (Health Level 7), a standard for data exchange between applications and the HIS. The standard established itself in almost all hospital applications.

Thanks to faster processor developments in the mid-’90s, 3-D reconstruction of CT and MRI images became possible. In ’94 a 3-D reconstruction with ultrasound scanners took almost 15 minutes. Today parents can view their unborn babies’ movements in real time.

Networking technology also drove the spread of IT systems. Its roots lie in the host-terminal relationship: a powerful central host serves the individual terminals, which act purely as input and output devices. The client-server architecture only became established through Windows: applications are installed on clients with high storage and computing capacity, and the server connects these with one another and facilitates the exchange of data.

Revolutionary imaging

Imaging procedures have rocketed ahead over the last 40 years. Soon after the X-ray image intensifier was developed in the ’50s, nuclear medicine was born. In the ’60s came ultrasound diagnostics and the invention of the flexible endoscope; in the ’70s came the computed tomography (CT) scanner and, in the ’80s, magnetic resonance imaging (MRI). A decade later positron emission computed tomography (PET) entered nuclear medicine.

Sound waves - Four decades ago the triumph of real-time ultrasound diagnostics began. Two medical disciplines drove ultrasound innovations: obstetrics required the meticulous detection of potential antenatal problems, and cardiology required sharp images of the beating heart. These needs have been fulfilled by ultrasound. Doppler ultrasound, followed by colour-coded duplex ultrasound, shows the direction and speed of blood flow.

CT has progressed significantly: much faster machines enable much shorter examination times. The need to capture the beating heart and show its anatomy drove the development of the CT technology known as spiral CT. Today, multidetector CT technology, with many detector slices, produces whole-body images within a few rotations of the X-ray tube.

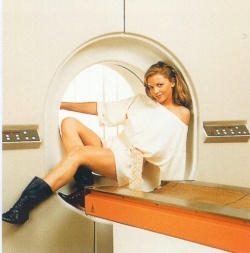

MRI - Three decades after the foundations of today’s medical MRI scanners were laid, this radiation-free procedure is still only revealing a small part of its enormous potential. Its images combine the morphology and function of the human organism and provide a wealth of visible tissue parameters: proton density of tissue, tissue-specific relaxation times and temperature, followed by functional parameters such as flow (perfusion), diffusion and tissue elasticity. In vivo MR spectroscopy to detect partial metabolic processes and their metabolites is only just beginning. It is conceivable in principle that MR imaging may be possible with nuclei other than the hydrogen protons in the body.

Nuclear medicine - Positron emission computed tomography (PET) can quantify metabolic processes, such as glucose, fatty acid or amino acid metabolism, in vivo. PET can even detect neuro- or oestrogen receptors. Labelled metabolites, receptor substances, enzymes and cytostatics have found their way into diagnostics. A particular feature: the function of labelled, small-molecule metabolic substrates is hardly changed by the interaction with a receptor or an enzyme.

Patient monitoring - In the mid-’60s, when people marvelled at moon landings, the first patient monitoring systems arrived in operating theatres and, soon after, at patients’ bedsides. Since then the standard equipment on intensive care wards has facilitated the monitoring of essential organ functions 24/7. Many of today’s surgical interventions are only possible because intensive care technology keeps surgical trauma under control. For example, whereas rectal carcinoma was once considered inoperable in those aged over 65 years, nowadays this is a common operation.

Keyhole surgery - 1969 saw the introduction of endoscopic surgery in gynaecology – initially resisted by surgeons until, in 1985, the first keyhole gallbladder removal was performed. Then, about 15 years ago, came keyhole surgery in the abdomen. Laparoscopic surgery on the ovaries, appendix, uterus and for the removal of adhesions will soon be followed by procedures on the vegetative nervous system, stomach, intestine and probably also the liver.

Lasers - For 30 years the laser has ‘lit up the medical sky’. In ophthalmology, for example, it would now be impossible to imagine work without lasers; from lens removal to the treatment of short-sightedness and long-sightedness, the laser works with precision and is superior to any scalpel. It has become equally established in ear, nose and throat surgery. Whereas in the case of tumours of the upper airways and oesophagus it had often been necessary to remove the entire larynx, for many patients it is now possible to preserve vocal function. Function-preserving laser microsurgery is a procedure whereby the laser beam, inserted via mouth or nose, vaporises a tumour layer by layer under microscopic view.

Cardiac devices - Invented 50 years ago and first implanted by cardiologists a decade later: the cardiac pacemaker. In 1983, it became possible to adapt these units to the respective stress situation of individual patients. Since ’79 it has been possible to check battery status and electrode function externally. Today more than 65,000 first implantations and 17,000 replacement implants are carried out annually, and devices can record and relay cardiac functions to physicians via mobile phones.

The first cardioverter-defibrillator pacemaker was implanted in 1980. This had an in-built electroshock function to prevent sudden cardiac death. However, deaths from cardiac insufficiency followed, which led to the development of an electrophysiological device for cardiac resynchronisation therapy. This prevents cardiac insufficiency, avoids the need for a heart transplant and reduces mortality significantly.

12.11.2009